Aaron W. Calhoun 1, Asit Misra 2, Rami A. Ahmed 3

[1] Department of Pediatrics,

[2] Department of Surgery, Division of Emergency Medicine,

[3] Department of Emergency Medicine, Division of Simulation,

Article Type: Editorial

It was not so long ago that simulation was considered a ‘niche’ field. When medical education was brought up, most minds turned, almost reflexively, to didactic or bedside training. In those days, it seemed anything reasonable was on the table. Little to no formal training existed, and simulation research largely focused on questions of viability and comparability to the tried and true. Those days, however, are no more. In a few short decades, our specialty has grown from childhood, through the pre-teen phase, and into its current late adolescence. This period is marked by increasing formalization, ubiquitous use and ever-deepening interactions with other technological developments such as 3D printing, gamification, virtual and mixed reality, and generative artificial intelligence [1–3]. Rapid growth such as this can be exciting, but at such times it is valuable to consider not just where we are going, but where we should be going. With this goal in mind, we suggest the following high-yield paths.

Much of the history of simulation has been one of increased accessibility. The technology has evolved from bulky, immobile mannequins that could only be housed in a brick-and-mortar simulation center to lighter, more mobile units that can be deployed in situ throughout a health system [4,5]. This trend towards accessibility has even enabled the effective deployment of simulation-based interventions in resource-poor settings [6]. Given the significant cost, however, this ‘democratization’ has yet to occur with virtual reality (VR).

Standard mannequins typically have relatively accessible operating systems. VR is different: the average simulationist simply cannot develop and implement their own VR cases because they lack the required artistic and programming expertise. Cases must therefore be purchased from the company providing the VR system, which adds significant cost. However, the advent of large language models (such as ChatGPT) offers a means of altering this landscape, as they have already proven adept at writing game scripts in common programming languages [7]. An LLM-assisted system for authoring VR cases would place the ability to create, disseminate and implement VR cases essentially back into the hands of the simulationist.

A second path involves a concerted effort to expand the use of simulation-based testing and research methodologies across the medical community. Our field may have begun primarily as a way to provide immersive education, but its potential applications far exceed this. Indeed, two of the most potent uses of simulation are the real-time detection of latent safety threats within a health system and the provision of a controlled setting in which to test non-simulation-related interventions [8–11]. The first of these leverages in situ simulation to assess care practices as they occur within the actual care environment. In both use cases, the potential value of simulation lies in its ability to recreate almost any clinical scenario in a reproducible, standardized manner. This ability is well known within our community of practice but, in our interactions with other fields of medicine, is not well understood. Innovating in this area will require some degree of deliberate ‘infiltration’ of the simulation sections and special interest groups of national and international medical organizations. This would give us the leverage needed to propose simulation as a solution to problems as they arise. More widespread use of simulation in this way would also benefit our own community of practice by providing a wider platform for ongoing programme evaluation and for acquiring the higher-level outcomes data (i.e. Kirkpatrick Level 4) needed to demonstrate return on investment and sustainability.

A final path concerns how we maintain and synthesize the information present within the scholarly foundations of our field. Over the past 15 years, the Society for Simulation in Healthcare (SSH) has conducted three research summits, each charged with synthesizing what is currently known, gathering input from beyond the field and charting a path forward in terms of both research goals and practical guidelines [12–14]. As the amount of available literature expands in both volume and methodological diversity, it is becoming apparent that a less episodic, more comprehensive approach may be needed. Such an approach would involve a continuous review of new literature as it is published, generating syntheses and guidelines on an ongoing basis as consensus develops. An explicitly inclusive approach to both quantitative and qualitative methodologies is also required, as both approaches hold meaningful insights for practice. One potential model for a process such as this is the International Liaison Committee on Resuscitation (ILCOR), which conducts ongoing reviews of key aspects of the resuscitation literature with the goal of producing and revising guidelines in real time as new insights are obtained [15].

Our field is experiencing unprecedented growth in terms of technology and scope. As we move forward, we must seize each opportunity to direct this growth in ways that increase our ability to make a difference in the lives of the patients we care for. We hope the above suggestions will inspire readers to do just that in new and creative ways.

1.

2.

3.

4.

5.

6.

7.

8.

9.

10.

11.

12.

13.

14.

15.

Thom O’Neill 1, 2, Andrew Merriman 1, 3, Sara Robinson 1, 4

[1]

[2]

[3]

[4]

It is widely recognized that solutions are increasingly needed to address microaggressions (Figure 1) and discrimination in healthcare environments, with approaches being developed at both undergraduate [1] and postgraduate [2] levels. Active Bystander Training (ABT) has been used successfully in military, higher-education and government workplaces but nevertheless remains an under-utilized resource for tackling workplace microaggressions and discrimination in the NHS [3].

Microaggression definition.

Whilst some approaches to training remain confined to didactic sessions and facilitated discussions [1], simulation-based medical education (SBME) is starting to incorporate microaggressions into clinical scenarios [2]. This is a positive step forward; however, additional consideration must be given to the psychological safety of participants and faculty when scenario content may prove emotionally distressing or harmful. Reports of successfully incorporating microaggressions into blinded immersive simulation scenarios are tempered by accounts of faculty feeling emotionally uncomfortable [4], and mitigating the psychological threat to simulated patients from diverse communities remains a challenge [5].

We believe that incorporating incidents of microaggressions into blinded immersive simulation poses a risk to the psychological safety of everyone involved, particularly participants (including faculty and actors) with protected characteristics.

Locally, common feedback from staff who had completed the existing ABT was that their confidence to intervene was limited by difficulty with word choice and phrasing. We therefore designed an innovative ‘Beyond Bystander’ workshop to follow on from knowledge-based training. It enables open exploration and, crucially, rehearsal of bystander interventions in a psychologically safe environment.

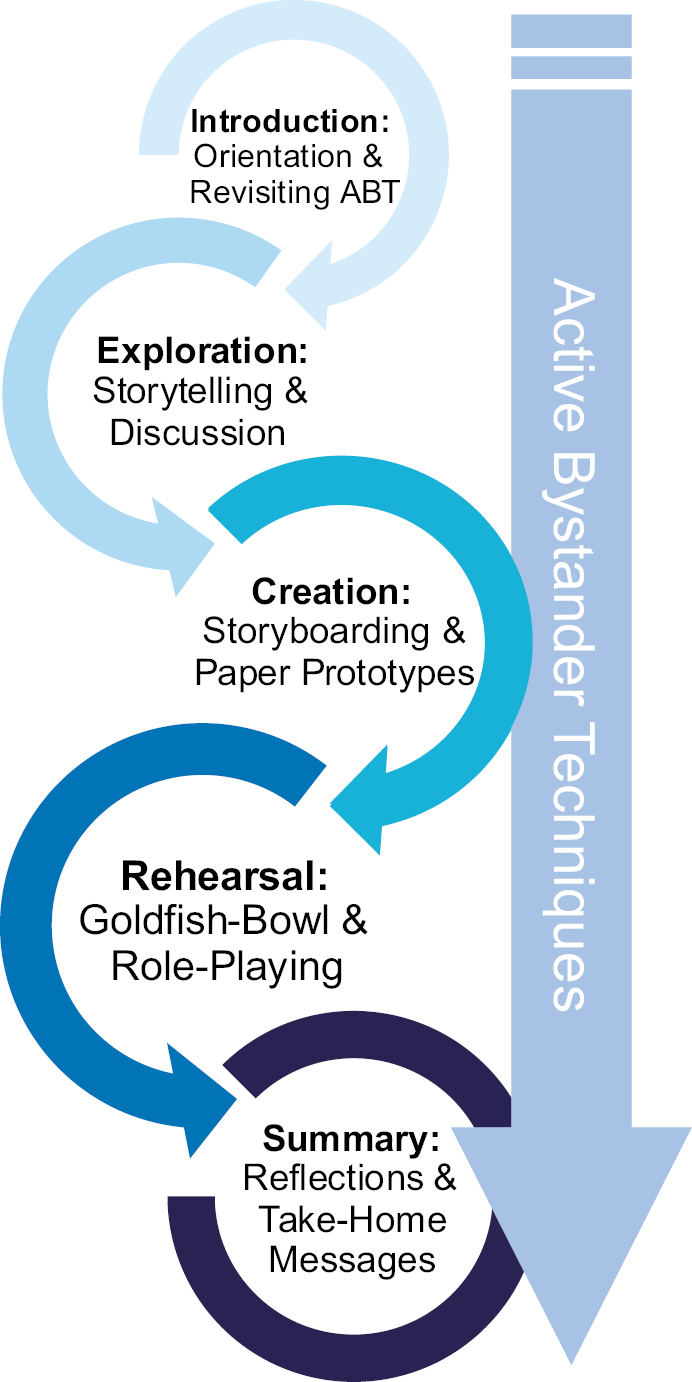

Our approach uses a helical structure that stacks simulation pedagogies, with each phase building on the previous one (Figure 2), and refers consistently to Active Bystander techniques throughout. Trained simulation facilitators, who themselves have protected characteristics and lived experience, guide participants through the workshop.

The Beyond Bystander Workshop helical design.

In the orientation, we give an overview of ABT and introduce the workshop content. Importantly, our design incorporates a ‘distress protocol’ that guides facilitators in how best to support participants. This protocol is highlighted in the orientation alongside focused ‘ground rules’.

Using narrative pedagogy, we invite participants to share experiences of witnessing microaggressions in the workplace or of opportunities for bystander intervention (successful or otherwise). This encourages discussion and reflection in the context of active bystander techniques.

The workshop then builds from storytelling to storyboarding, using creation to explore individual word-choice and phraseology preferences. Groups are tasked with designing and scripting simulation scenario vignettes involving examples of microaggressions across different themes. By choosing the microaggression examples themselves, often drawn from the storytelling phase, participants can safely explore themes and approaches without artefact or surprise.

The final stage uses a hybrid role-play simulation, adopting a goldfish-bowl technique to simulate witnessing microaggressions in the clinical environment. Pre-recorded scenario vignettes, each depicting a microaggression, are played to the groups; they follow the same scenario format used in the storyboarding phase. Each vignette includes an observer character who has the potential to become an active bystander. The vignettes cut off at the point where intervention may be indicated, and the groups are prompted to take on the characters and role-play the interaction to explore their individual word-choice and phrasing preferences. The content of each vignette is pre-briefed, and facilitators support each group in monitoring psychological safety.

We conclude the Beyond Bystander workshop with a summary of discoveries and take-home messages, as well as highlighting keywords and phrases shared by the role-playing groups that were particularly helpful.

The workshop was evaluated using a combination of pre- and post-session feedback questionnaires and a group interview immediately after the workshop was completed. The questionnaire gathered data on participants’ knowledge of bystander interventions and their confidence in both knowing what to say and actively intervening if they witnessed a microaggression. The post-session questionnaire and group interview also specifically asked about psychological safety, as well as session mechanics and structure.

Four participants attended a proof-of-concept workshop. Evaluation data demonstrated that the workshop increased participants’ knowledge of bystander interventions and their confidence in the words they would use when acting as a bystander. All participants strongly agreed that their psychological safety was protected during the workshop, and they felt comfortable sharing their experiences of microaggressions.

Based on our preliminary feedback, we aim to study our workshop design more comprehensively with larger participant groups from a diverse set of professional roles. Additional longitudinal research will be conducted to explore participants’ experiences of utilising bystander techniques and reflections on whether the workshop encouraged or facilitated these.

We believe our helical design, which stacks simulation pedagogies to preserve psychological safety when addressing difficult subjects, is transferable to similar themes. We already plan to adapt the design to address incivility, and it could potentially be used to explore male allyship as part of an approach to tackling sexual misconduct in the workplace.

Active Bystander Intervention: Training and Facilitation Guide Copyright © 2021 by Sexual Violence Training Development Team (https://opentextbc.ca/svmbystander/) is licensed under a Creative Commons Attribution 4.0 International License, except where otherwise noted.

None declared.

None declared.

None declared.

None declared.

None declared.

1.

2.

3.

4.

5.

Adam Bonfield 1, Elena Dickens 2, Charlotte Grantham 3, Darcy Fidoe 3, Mary Mushambi 4

[1]

[2]

[3] Department of Clinical Education,

[4]

The National Health Service (NHS) is undergoing a radical digital transformation to update systems and support long-term sustainability. One of the government’s priorities is to ensure that all trusts have electronic patient records (EPRs) by March 2025 [1]. However, simulation training has been slow to adopt such systems and often relies on dated paper charts and referral forms [2]. This reduces the psychological fidelity and immersion of the learning experience, making our simulation exercises increasingly unrepresentative of clinical practice. It is therefore important to incorporate technologies from clinical settings into simulation training to better prepare students and staff [3]. However, this has been impeded by a lack of bespoke EPR training software [4].

At a UK-based university, final-year medical students undertake ‘WardSim’, a fully simulated ward comprising 3 clinical teams and 22 patient scenarios [5]. These scenarios include simulated patients, manikins and task trainers. The aim is to enable students to practise clinical and non-technical skills such as task prioritization, team working, communication and escalation in preparation for foundation training. In collaboration with Nervecentre, we developed a customizable simulated EPR to embed into ‘WardSim’. The simulated EPR was based on the live Nervecentre system, which is used clinically by the local NHS trust. Features include observations, electronic prescribing and the facility to write discharge summaries. Investigation results were uploaded to the patient record as PDF images.

Prior to ‘WardSim’, students familiarized themselves with Nervecentre by reading the user guide, completing an e-learning module and practising on clinical placements.

A mixed-methods approach, using online pre- and post-session surveys, was used to measure the impact of embedding Nervecentre into ‘WardSim’. Students’ self-perceived confidence was assessed in eight areas using a 5-point Likert scale (5 = high confidence, 1 = low confidence). Qualitative data were collected through free-text responses, and an inductive thematic analysis was conducted to identify themes. Faculty members gave feedback on Nervecentre’s ease of use, educational benefit and student engagement with the software.

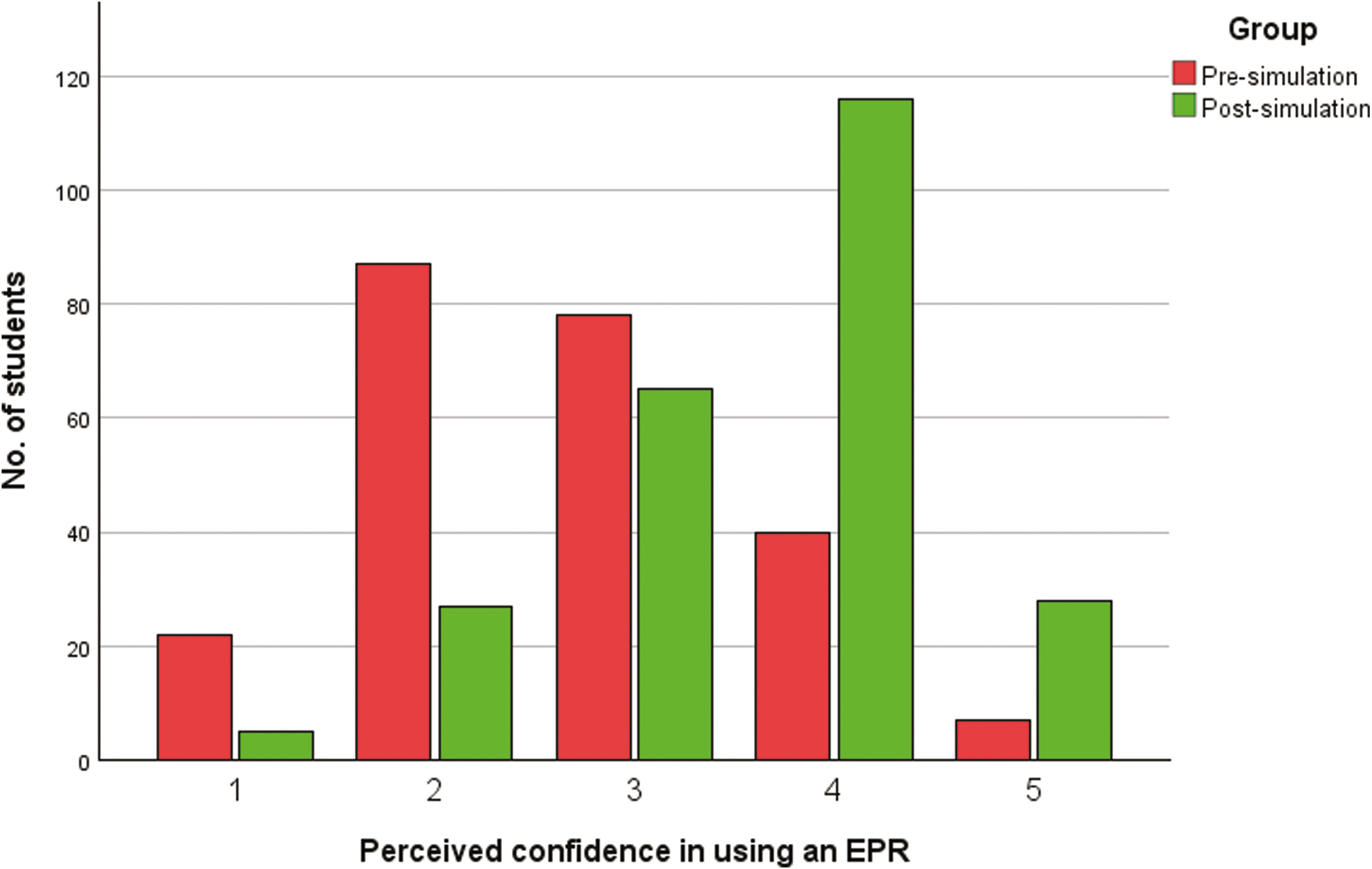

From 8th to 15th January 2024, 276 students and 43 faculty members participated in ‘WardSim’. Pre-session and post-session surveys were obtained from 234 and 244 students, respectively, and post-session surveys from 37 faculty members. Student responses demonstrated a statistically significant increase in mean confidence scores across all areas. This included confidence with job prioritisation (2.6 vs 3.6, p < 0.001), documentation (2.7 vs 3.4, p < 0.001), communication (2.5 vs 3.6, p < 0.001), teamwork (3.2 vs 3.9, p < 0.001), preparedness for foundation training (2.5 vs 3.3, p < 0.001), EPR usage (2.7 vs 3.6, p < 0.001; Figure 1), electronic prescribing (2.6 vs 3.6, p < 0.001) and preparing discharges (2.7 vs 3.2, p < 0.001). Overall, students found the integration of Nervecentre into ‘WardSim’ useful (mean = 4.6) and easy to use (mean = 3.9), the latter mirrored by faculty (mean = 4.4). In addition, faculty felt that students engaged well with using Nervecentre and thought it to be educationally beneficial (mean = 4 and 4.7, respectively).

Comparison of confidence using an EPR system pre- and post-simulation.

Analysis of free-text comments generated four major themes on the simulated Nervecentre: fidelity, utility, familiarity and prescribing.

Fidelity: Many student comments suggested it gave a more realistic and accurate representation of clinical practice.

Utility: Students consistently stated it was convenient and useful, especially to track patients and remain on task.

Familiarity: Being able to practise using Nervecentre in a simulated environment fostered greater awareness and confidence in using it clinically.

Prescribing: The ability to practise electronic prescribing of critical medications in a safe environment was particularly beneficial.

One free-text question asked about what could be improved with the simulated Nervecentre. The results are summarized in Table 1.

| Theme | Suggested improvement |
|---|---|
| Training | Students wanted more training prior to using Nervecentre in ‘WardSim’. Some students had limited experience with Nervecentre due to their placements being outside of the tertiary teaching hospital. |
| Requests/results | Students would have preferred online investigation requests (i.e. CT/bloods) to be available and to see the results in real time |
| Devices | Students wanted more computers/tablets to access Nervecentre and found it difficult to use the web-based browser version on the tablets |
| Prescribing | Students would like to see if/when medication doses had been administered |
| More opportunities | Students wanted more simulation sessions with Nervecentre incorporated |

Currently, the scope to integrate an EPR into simulation training is restricted by the scarcity or limited quality of such systems. We have demonstrated success in embedding an EPR into undergraduate simulation training. Our results show it can improve simulation fidelity and provide valuable EPR training for application to clinical practice. There is scope for further development of in-programme features, such as an integrated request system for ordering investigations. Locally, we are looking to incorporate a Nervecentre app into simulation training; this should resolve the difficulties students had using the web-based browser on tablets and will include the ability to request investigations digitally. We are also expanding the simulated EPR into other simulations and developing a student reference book. Furthermore, we are looking to introduce the electronic prescribing aspect of the simulated EPR into our pharmacology teaching block. We hope that these measures will let students encounter the EPR earlier in their training and mitigate the lack of familiarity with Nervecentre that some students reported before ‘WardSim’. In addition, we are incorporating the EPR into local postgraduate simulation training. This is arguably an even more important application of the Nervecentre simulated EPR, as it will encompass a multidisciplinary cohort of healthcare practitioners who actively use EPRs in their clinical roles. For them, the current disconnect between professional practice and simulated learning may have an even greater effect on fidelity and engagement, which the simulated EPR could alleviate.

Overall, we advise simulation educators to consider embedding EPR into both undergraduate and postgraduate training to improve translation of learning from the simulated to the clinical environment and promote research in this important area. To facilitate this, we encourage other EPR developers to design customisable simulated packages to bridge the gap between simulation training and clinical practice.

The authors wish to acknowledge the Nervecentre team for the development of the simulated EPR environment and for the ongoing help in improving the user interface for medical training.

AB, ED and MM conceptualized the study innovation. CG, DF, AB and MM were responsible for design, data collection, analysis and interpretation of the results. All authors significantly contributed to manuscript preparation.

No direct funding was received, but support was received from Nervecentre in allowing the use of the Nervecentre software for teaching.

Available on request.

None declared.

The Nervecentre team adapted the local EPR to be used in a simulated capacity. None of the authors have any affiliation with Nervecentre.

1.

2.

3.

4.

5.

Tina Wu 1, Andrew R. Coggins 2

[1]

[2]

Simulated medical records guide experiences in simulation by providing key clinical information [1]. As educators, we observe a range of interactions with medical records in team-based simulation. For example, facilitators may reference them as prompts, novice learners apply new skills in gathering information, and senior clinicians filter information quickly to make complex decisions. It naturally follows that participants should have access to familiar and realistic medical records to allow understanding of scenario context and communication of the patient’s story.

Simulated medical records are usually paper based rather than electronic, which may conflict with participants’ normal experience. This incongruence risks distraction and may limit ‘suspension of disbelief’ and scenario engagement, potentially inhibiting transfer of learning [1]. While electronic medical record (EMR) use in simulated system testing and individual learning is widely described, there is little reporting of its use in team-based simulation [2–4]. This pilot study therefore describes and evaluates a low-cost EMR interface designed for team-based simulation.

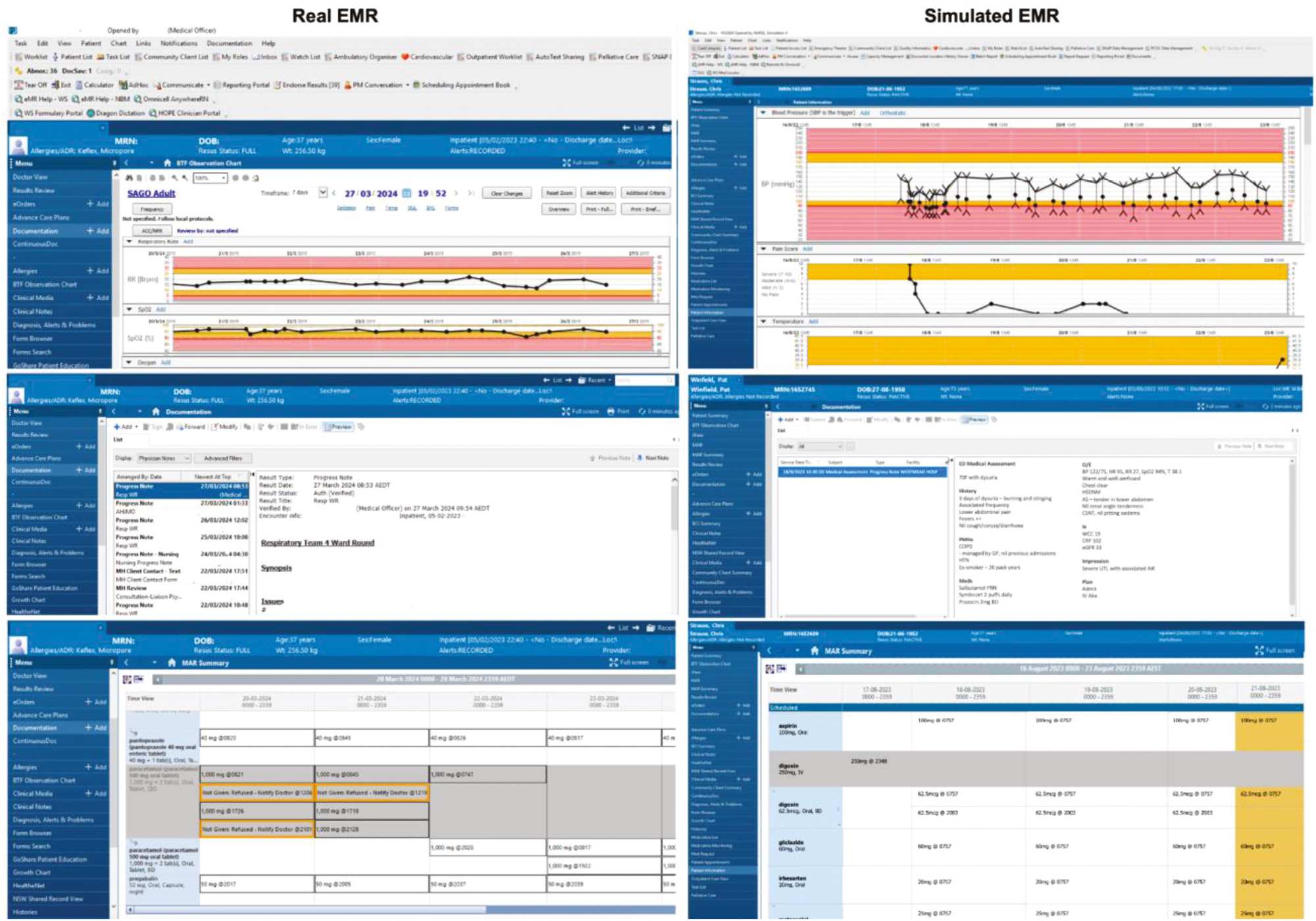

Four commonly used simulation scenarios were designed using slide presentation software in consultation with local simulation stakeholders. High-resolution screenshots of the current EMR (Cerner™) were captured and patient identifiers were removed (Figure 1). Substitute patient information, including demographic information, documentation, laboratory results, medications chart and observations chart, was transferred from soft copies of existing paper cases into the new simulated EMR.

Screenshots of the simulated electronic medical records (EMRs) and the institution equivalent (Cerner™). Comparison of real (left) and simulated (right) EMRs with three sample screen captures comparing the observation chart (top), documentation (middle) and medications chart (bottom).

Populated sections were hyperlinked to facilitate realistic interaction, while non-populated areas were hyperlinked to the current slide to prevent unwanted slide progression. The final version is available as a free open-access medical education (FOAMed) file at www.emergencypedia.com/EMR.

To evaluate the simulated EMR, we enrolled consecutive simulation courses that used the four eligible scenarios from January to March 2024. Paired rooms ran the same scenario, with each room allocated by location to either paper records or the EMR. Participants (faculty and learners) were then invited to complete a brief simulation survey that included questions evaluating the medical record (Table 1). Outcomes included clinical role (doctor, nurse and staff), experience (years), 7-point Likert scale questions (Table 1) and optional commentary. Likert scale responses were compared using the non-parametric Mann–Whitney test. Given the limited quantity and detail of free-text responses, qualitative analysis was performed using a manifest content approach to identify repeated ideas. These concepts were then iteratively evaluated against the data using deductive analysis to form thematic conclusions (Table 1).
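The source does not say which software performed the Mann–Whitney comparison, so the following is only a minimal Python sketch of how such a test works, not the authors' analysis code. The function names `average_ranks` and `mann_whitney_u` are hypothetical. The sketch assumes a two-sided test using the large-sample normal approximation with a tie correction, which matters for Likert data because many responses share the same score. In practice, `scipy.stats.mannwhitneyu` would normally be used instead. Its p-values may differ slightly from this sketch because, by default, it applies a continuity correction and may use an exact method for small samples without ties.

```python
import math
from typing import List, Sequence, Tuple


def average_ranks(values: Sequence[float]) -> Tuple[List[float], float]:
    """Rank values 1..n, giving tied values the mean of their ranks.

    Also returns sum(t**3 - t) over tie groups (t = size of each group),
    which is used in the tie correction of the variance of U.
    """
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    tie_term = 0.0
    i = 0
    while i < len(order):
        j = i
        # Extend j across the run of values equal to values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # 1-based average rank of the tied run
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        t = j - i + 1
        tie_term += t ** 3 - t
        i = j + 1
    return ranks, tie_term


def mann_whitney_u(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Hypothetical two-sided Mann-Whitney U test (normal approximation,
    tie-corrected, no continuity correction). Returns (U for x, p-value)."""
    n1, n2 = len(x), len(y)
    if n1 == 0 or n2 == 0:
        raise ValueError("both samples must be non-empty")
    n = n1 + n2
    ranks, tie_term = average_ranks(list(x) + list(y))
    r1 = sum(ranks[:n1])  # rank sum of the first sample in the pooled ranking
    u1 = r1 - n1 * (n1 + 1) / 2
    mean_u = n1 * n2 / 2
    var_u = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    if var_u <= 0:
        # Every observation is tied, so the samples cannot be distinguished.
        return u1, 1.0
    z = (u1 - mean_u) / math.sqrt(var_u)
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided standard normal tail
    return u1, p
```

For example, `mann_whitney_u([6, 6, 5, 7], [5, 4, 6, 5])` would compare two small sets of invented Likert scores (illustrative values only, not study data). With samples as small as this, the normal approximation is rough, which is one reason dedicated statistical packages offer exact methods.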

| 7-Point Likert questions in survey (Median, IQR) | Electronic medical records (n = 44) | Paper medical records (n = 32) | Mann–Whitney test, p-value |
|---|---|---|---|
| 1. Satisfactory simulation. Overall, the medical records satisfactorily simulated clinical patient records, for example, observations, medication chart and documentation. | 6 (IQR 5–6) | 5 (IQR 4–6) | 0.001ᵃ |
| 2. Clinical usefulness of the records. The patient records represented useful clinical information for the simulation. | 6 (IQR 5–6) | 5 (IQR 5–6.5) | 0.261 |
| 3. Contribution to learning. The patient records in their form contributed positively to my experience and learning in the simulation. | 6 (IQR 5–6) | 5 (IQR 4–7) | 0.244 |
| 4. Overall scenario representation. Overall, the simulation satisfactorily represented the clinical scenario. | 6 (IQR 5–7) | 6 (IQR 5–7) | 0.987 |
| Thematic qualitative feedback | Electronic medical records (EMR) | Paper medical records | |
| Realism and fidelity | Participants commented on the highly ‘realistic’ nature of the simulated EMR, which ‘looked like the real thing’. This extended to authentic equipment like the ‘Workstation on Wheels’. | Participants had mixed reactions about realism with some commenting paper records ‘do not represent reality’ while others suggested they were a ‘good representation’. | |
| User experience and functionality | There was positive feedback from participants who reported the simulated EMR ‘worked well’ and was ‘familiar’. An instructor noted this solution removed the possible legal implications of using a ‘fake patient on the real EMR’. | Participants were ‘not used to’ paper medical records and found them ‘very awkward’, given that clinical settings now use electronic records. | |
| Contribution to learning | Participants did not directly make conclusions about the value of the electronic records to the process of learning, but they were described as ‘appropriate’ given the use of EMR clinically. Some participants found the simulated EMR was not utilized in scenarios that were already ‘overwhelming’. | Participants found that paper records were able to ‘sufficiently provide a narrative’, which was required for patient context. It was suggested that paper records make the simulation ‘process easier’ despite not reflecting ‘real life’. | |
| Future improvements | Participants suggested future improvements including access to resources like ‘guidelines or drug’ databases, as well as versions to simulate future EMR systems. | Participants commented on confusing errors on the paper records, including mismatched names and previously charted medications. | |

ᵃ Statistically significant (p < 0.05).

Approximately one-third of course participants completed a survey (n = 76). Of those, the majority had a medical background (n = 63). Clinical experience varied widely, and 24 respondents had no clinical experience. Table 1 summarizes the major outcomes of the study. Participants rated the EMR version as simulating patient medical records significantly more satisfactorily, but there was no statistically significant difference in perceived clinical usefulness, contribution to learning or overall realism of the scenario.

Key qualitative themes emerged around medical record realism, user experience, contribution to learning and future improvement. The simulated EMR was rated highly in terms of realism and user experience, whereas paper records were found to be ‘awkward’ and unfamiliar. Regarding learning, participants did not directly comment on the contribution of the EMR but found paper records ‘sufficient’ for learning, despite their not accurately representing clinical norms. Users suggested improvements such as correcting discrepancies in patient names on the paper records and adding future functionality to the EMR.

Table 1 summarizes a quantitative and qualitative comparison of electronic and paper medical records as evaluated by our post-simulation survey. EMRs simulated records more satisfactorily than paper, with a statistically significantly higher median score. EMRs also had higher median scores for clinical usefulness and contribution to learning, but these differences were not statistically significant. There was no difference in overall scenario representation. Key themes of the qualitative feedback (realism and fidelity, user experience and functionality, contribution to learning, and future improvements) are presented by comparing the EMR with paper records.

Quantitative and qualitative evaluation showed that this simulated EMR improved realism and engagement. The impact of realism on learning was more complex; previous researchers have attributed this to the additional cognitive load that enhanced realism may impose [5]. It is thus important to design simulations with appropriate equipment selection, including medical records, to achieve the desired learning outcomes.

The key strengths of the simulated EMR are its simple, low-cost design and its use of accessible resources. Its realism was further enhanced when it was used with redundant institutional Workstations on Wheels. Additionally, this resource requires no specialist technical skills and can now be easily downloaded and modified. It avoids using the institutional EMR for simulation, which is likely a suboptimal solution given community concerns about potential inadvertent access to real patient records [6]. Cost and time are further barriers to using institutional EMRs, which require dedicated networked computers and detailed governance.

Both the simulated EMR and its observational evaluation have limitations. The screens (Figure 1) are superficially realistic but not fully interactive. While the EMR passively provides clinical information, learners cannot document, prescribe or order investigations directly. This did not detract from the participant experiences reflected in Table 1, likely because verbal prescribing is acceptable in emergency situations and documentation is usually delayed until the clinical situation is stable. However, in scenarios requiring urgent imaging or pathology ordering, the simulated EMR would not realistically represent the required clinical actions. This is also a limitation of paper medical records.

Regarding the study evaluation, the small sample size and observational methods limit the external validity and generalizability of the findings. Readers should consider whether this solution is appropriate for their context. To increase the reach of the tool, we plan to develop versions that mimic EMRs other than Cerner™, such as Epic™.

In summary, we observed that learners and educators using a new simulated EMR reported it realistically portrayed medical records when compared with paper. This EMR solution is a low-cost and accessible way to improve realism in simulation, and it is now an open access and modifiable resource for simulation educators.

Thank you to the Westmead SiLECT faculty for their role in implementing and evaluating this new simulated EMR, and to Karen Byth for her assistance with statistical analysis.

None declared.

This study received no external funding.

None declared.

None declared.

None declared.

1.

2.

3.

4.

5.

6.